You open Netflix seeking entertainment, and within seconds, the interface presents a grid of suggestions that feel uncannily tailored to your preferences. You launch Spotify, and an algorithmically curated playlist introduces you to artists you’ve never heard of but immediately appreciate. You browse Amazon, and the site anticipates purchases you hadn’t consciously considered but find yourself wanting. These experiences share a common architecture: recommendation engines that appear to read minds through mathematical prediction rather than telepathy.

The phenomenon represents one of artificial intelligence’s most commercially successful applications. These systems analyze behavioral data—what you watch, listen to, purchase, or ignore—to predict what content or products you might value next. The underlying challenge they address is information overload. Netflix hosts thousands of titles; Spotify contains millions of tracks; Amazon lists hundreds of millions of products. Without algorithmic curation, users would face paralysis by choice or, more likely, abandon their search entirely. Netflix research indicates users typically allocate only 60 to 90 seconds to finding content before giving up—a constraint that makes effective recommendation not merely convenient but economically essential.

This article examines five leading recommendation engines, analyzing the technical mechanisms underlying their predictions and the design decisions shaping user experience. The examples span entertainment (Netflix, Spotify, YouTube), commerce (Amazon), and social platforms (Facebook, Twitter). Beyond cataloging these systems, we’ll explore why technical sophistication alone proves insufficient—why the best AI recommendations require thoughtful user experience design that builds trust, provides control, and respects the boundaries between helpful personalization and intrusive surveillance.

For product designers, engineers, and strategists working on personalized systems, understanding these implementations reveals both what’s technically possible and what proves commercially viable. The gap between these categories remains significant and instructive.

How Machine Learning Powers Modern Discovery

Recommendation engines represent applied machine learning—the subset of artificial intelligence focused on systems that improve performance through experience rather than explicit programming. Traditional software follows deterministic rules: given input X, produce output Y. Machine learning systems instead identify statistical patterns in data and use those patterns to make predictions about new, unseen cases.

The evolution from simple co-occurrence heuristics (“users who bought X also bought Y”) to contemporary systems reflects broader progress in machine learning capability. Early recommendation approaches relied on collaborative filtering: finding users with similar historical preferences and recommending items those similar users enjoyed. This method works reasonably well but has two notable limitations. It struggles with new users and new items that have little or no interaction history (the “cold start problem”), and it cannot account for content attributes that might predict preferences independent of user behavior.
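
To make the idea concrete, here is a minimal sketch of user-based collaborative filtering. The users, items, and ratings are invented for illustration, and the function names (`cosine`, `recommend`) are assumptions rather than any platform's actual API.

```python
from math import sqrt

# Hypothetical toy data: user -> {item: rating}
ratings = {
    "ana": {"A": 5, "B": 4, "C": 1},
    "ben": {"A": 5, "B": 5, "D": 4},
    "cy":  {"C": 5, "D": 2, "E": 4},
}

def cosine(u, v):
    """Cosine similarity between two sparse rating dicts."""
    shared = set(u) & set(v)
    if not shared:
        return 0.0  # no overlap: nothing to compare (the cold start case)
    dot = sum(u[i] * v[i] for i in shared)
    norm_u = sqrt(sum(x * x for x in u.values()))
    norm_v = sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v)

def recommend(user, ratings, k=2):
    """Rank unseen items by the similarity-weighted ratings of other users."""
    scores = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        for item, r in their.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + sim * r
    return sorted(scores, key=scores.get, reverse=True)[:k]

print(recommend("ana", ratings))
```

A user with an empty history has zero similarity to everyone, so every candidate scores zero. That is the cold start problem in miniature.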

Neural networks address these limitations through architectural sophistication. Loosely inspired by biological neural systems, these computational structures consist of interconnected nodes arranged in layers. Information passes through the network, with each connection weighted according to its predictive importance. During training, the network adjusts these weights to minimize prediction errors across thousands or millions of examples. The result is a system that learns abstract patterns—combinations of features that predict outcomes—without requiring explicit human specification of what patterns matter.
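
The weight-adjustment loop can be illustrated with the simplest possible case: a single linear unit trained by gradient descent. This is a sketch under stated assumptions (toy data generated from y = 2x + 1, a hand-picked learning rate), not a realistic recommendation model.

```python
# Toy data following y = 2*x + 1 (an assumption made for illustration)
data = [(x, 2 * x + 1) for x in range(5)]

def train(data, lr=0.05, epochs=2000):
    """Fit y ~ w*x + b by repeatedly nudging w and b against the error gradient."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y          # prediction error on this example
            grad_w += 2 * err * x / n      # d(mean squared error)/dw
            grad_b += 2 * err / n          # d(mean squared error)/db
        w -= lr * grad_w                   # step against the gradient
        b -= lr * grad_b
    return w, b

w, b = train(data)
print(round(w, 3), round(b, 3))
```

Real networks stack many such units with nonlinearities between layers, but the training principle, adjusting weights to shrink prediction error, is the same.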

The terminology deserves clarification. “Deep learning” refers specifically to neural networks with many layers, allowing them to learn hierarchical representations of increasing abstraction. Early layers might detect simple features (in images: edges, colors; in audio: frequencies, rhythms), while later layers combine these into more complex patterns (objects, faces; melodies, genres). This hierarchical learning proves particularly valuable for recommendation systems processing rich media like video or music, where relevant features exist at multiple levels of abstraction.

Contemporary recommendation engines increasingly employ deep learning architectures because they can integrate diverse data types—user demographics, behavioral history, content attributes, contextual information—into unified predictive models. A system might simultaneously consider what you watched, when you watched it, what device you used, what you skipped, how quickly you finished series, and what content features characterize your preferred titles. Traditional approaches would struggle to integrate this heterogeneity; neural networks handle it naturally through their flexibility in learning complex, nonlinear relationships.

The shift toward deep learning also reflects a philosophical transition: from systems requiring extensive human feature engineering (specifying which variables matter and how) toward systems that discover relevant patterns autonomously. This reduces development time and potentially identifies predictive patterns humans would not recognize consciously. However, it introduces interpretability challenges—a tension we’ll return to when discussing user experience implications.

Netflix: The Master of “Taste Groups”

Netflix’s recommendation system represents perhaps the most commercially consequential application of machine learning to content discovery. The company estimates its recommendation engine saves over $1 billion annually through improved customer retention—a figure derived from preventing subscription cancellations by ensuring users consistently find content they value. Approximately 80% of viewing on Netflix originates from recommendations rather than direct search, indicating the system’s dominance in shaping user behavior.

The technical evolution of Netflix’s approach illustrates broader industry trends. Early implementations used relatively simple collaborative filtering: identifying users with similar viewing histories and recommending titles those similar users enjoyed. This worked adequately but struggled with Netflix’s expanding catalog and increasingly diverse subscriber base. The system’s predictions became less accurate as the ratio of available content to user viewing history grew.

The contemporary Netflix system employs neural networks trained on multiple data streams. The system tracks not merely what you watch, but how you watch it: completion rates for individual titles, viewing pace (do you binge or spread viewing across days?), abandonment points (the episodes at which viewers quit a series), replay behavior, and device preferences. These behavioral signals provide richer information than simple binary ratings (liked/didn’t like) because they capture engagement intensity and viewing context.

Thousands of Taste Groups

Netflix segments its user base into thousands of distinct “taste groups”—clusters of subscribers with similar preference patterns. This segmentation occurs through unsupervised machine learning algorithms that identify natural groupings in high-dimensional preference space. You belong to multiple overlapping taste groups simultaneously: perhaps one defined by preference for cerebral science fiction, another by tolerance for slow-paced narrative, another by preference for non-English content.

This clustering approach solves several problems. It addresses the cold start challenge for new users by quickly classifying them into preliminary taste groups based on minimal data, then refining classification as viewing history accumulates. It handles the scalability challenge of comparing each user against millions of others by reducing the comparison space to manageable taste group representatives. And it captures the reality that preferences are multidimensional—your enjoyment of action films doesn’t necessarily predict your documentary preferences.
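
As a rough illustration of clustering and of classifying a new subscriber, the sketch below runs a tiny k-means on made-up two-dimensional preference vectors (affinity for science fiction, affinity for documentaries). Netflix's actual features and algorithm are not public in this detail. Plain k-means is an assumption chosen for brevity, and it assigns each viewer to one group, whereas the groups described above overlap.

```python
def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def nearest(point, centroids):
    """Index of the centroid closest to `point`."""
    return min(range(len(centroids)), key=lambda c: dist2(point, centroids[c]))

def kmeans(points, centroids, iters=10):
    """Alternate between assigning points to centroids and re-centering."""
    for _ in range(iters):
        groups = [[] for _ in centroids]
        for p in points:
            groups[nearest(p, centroids)].append(p)
        centroids = [mean(g) if g else c for g, c in zip(groups, centroids)]
    return centroids

# Hypothetical viewers: (sci-fi affinity, documentary affinity)
viewers = [(0.9, 0.1), (0.8, 0.2), (0.1, 0.9), (0.2, 0.8)]
centroids = kmeans(viewers, centroids=[viewers[0], viewers[2]])

# A new subscriber with little history is compared against group
# representatives only, not against every other user.
new_subscriber = (0.7, 0.4)
group = nearest(new_subscriber, centroids)
print(group)
```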

The taste group assignments combine behavioral data with content metadata. Netflix employs human taggers who watch content and assign detailed codes describing attributes: narrative complexity, violence level, emotional tone, visual style, pacing, thematic content. These human-generated features complement algorithmically extracted features (viewing patterns, completion rates) to create rich content representations. The system learns which content attributes predict satisfaction for which taste groups, enabling recommendations even for newly added titles with limited viewing history.

However, this sophistication introduces opacity. Netflix cannot typically explain why it recommended a specific title beyond vague genre alignment. The neural network’s internal representations—the abstract patterns it learned to predict viewing satisfaction—don’t translate into human-comprehensible rationales. This “black box” quality creates user experience challenges we’ll explore in depth later.

The business impact extends beyond retention metrics. Recommendation influences production decisions. Netflix commissions content partially based on predicted appeal to underserved taste groups—identifying audience segments whose preferences are inadequately addressed by existing catalogs. This feedback loop from recommendation to content creation represents a distinctive strategic advantage unavailable to traditional studios lacking comparable user data and predictive infrastructure.

Spotify: The Three-Pronged Discovery Model

Spotify’s recommendation architecture exemplifies how combining multiple algorithmic approaches can produce better results than any single method. The service’s “Discover Weekly” playlist, updated every Monday with personalized music recommendations, has become a signature feature driving user engagement. The system behind these recommendations integrates three distinct machine learning approaches, each addressing a different aspect of the music recommendation challenge.

Matrix Analysis and Playlist Pairings

The first component employs collaborative filtering through matrix factorization—a mathematical technique that decomposes the user-track interaction matrix (who listened to what) into latent factors representing abstract preference dimensions. This approach identifies users with similar listening patterns and recommends tracks those similar users enjoy.
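
A minimal sketch of matrix factorization follows, using stochastic gradient descent on a tiny invented listening matrix. The hyperparameters (`k`, `lr`, `reg`, `epochs`) and the helper names are assumptions for illustration, and production systems use far larger matrices and more specialized solvers.

```python
import random

def factorize(interactions, n_users, n_items, k=2, lr=0.05, reg=0.01,
              epochs=500, seed=0):
    """Learn k latent factors per user (P) and per item (Q) from observed entries."""
    rng = random.Random(seed)
    P = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_users)]
    Q = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_items)]
    for _ in range(epochs):
        for u, i, r in interactions:
            err = r - sum(P[u][f] * Q[i][f] for f in range(k))
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q

def predict(P, Q, u, i):
    """Predicted affinity: dot product of user and item factor vectors."""
    return sum(pf * qf for pf, qf in zip(P[u], Q[i]))

# Hypothetical user-track matrix: two listener groups, two track groups.
R = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
held_out = (1, 1)  # pretend user 1 has not yet heard track 1
observed = [(u, i, R[u][i]) for u in range(4) for i in range(4)
            if (u, i) != held_out]

P, Q = factorize(observed, n_users=4, n_items=4)
print(predict(P, Q, 1, 1), predict(P, Q, 1, 2))
```

The held-out entry is never seen during training, yet the learned factors should score it well above a track from the other group, because user 1's observed behavior resembles user 0's.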

Spotify enhances basic collaborative filtering by analyzing playlist co-occurrence: which tracks appear together on user-created playlists. This signal provides richer information than individual listening history because playlists represent intentional curation decisions. When many users place two songs on the same playlist, this suggests meaningful similarity beyond mere popularity. The system learns that these tracks share some quality that listeners value, even if that quality isn’t explicitly labeled.
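
Playlist co-occurrence can be sketched with nothing more than pair counting. The playlists and track names below are invented, and raw counts are a simplification: a real system would likely normalize for track popularity.

```python
from collections import Counter
from itertools import combinations

# Hypothetical user-created playlists
playlists = [
    ["song_a", "song_b", "song_c"],
    ["song_a", "song_b"],
    ["song_b", "song_d"],
]

def cooccurrence(playlists):
    """Count how often each unordered pair of tracks shares a playlist."""
    counts = Counter()
    for tracks in playlists:
        for a, b in combinations(sorted(set(tracks)), 2):
            counts[(a, b)] += 1
    return counts

def similar_tracks(track, counts, k=3):
    """Tracks that most often appear alongside `track`."""
    related = Counter()
    for (a, b), n in counts.items():
        if a == track:
            related[b] += n
        elif b == track:
            related[a] += n
    return [t for t, _ in related.most_common(k)]

counts = cooccurrence(playlists)
print(similar_tracks("song_a", counts))
```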

This method works well for popular content with substantial listening history but struggles with new releases and obscure tracks lacking sufficient user interaction data. The collaborative approach also cannot explain why tracks are similar—it knows only that listeners treat them similarly.

Audio Attributes and Natural Language Processing

The second component addresses these limitations through content analysis. Spotify employs convolutional neural networks—architectures particularly effective for processing audio—to analyze the actual acoustic properties of tracks. The system extracts features like tempo, key, loudness, danceability, energy, and more abstract timbral qualities. This allows recommending new releases based on sonic similarity to tracks users have enjoyed, independent of popularity or listening history.

Complementing acoustic analysis, Spotify applies natural language processing to the vast corpus of text about music: reviews, blog posts, social media discussions, press coverage. The system identifies descriptive language associated with particular artists and tracks, learning semantic relationships between musical acts. If music journalists frequently describe two artists using similar adjectives, the NLP system infers these artists occupy related positions in musical space. This textual analysis captures cultural positioning and genre relationships that acoustic analysis might miss—the shared ethos of punk bands, for instance, which might not be acoustically obvious.

The integration of these approaches addresses each method’s limitations. Collaborative filtering handles preference prediction well but struggles with new content. Acoustic analysis works for new content but might miss subjective qualities. NLP captures cultural positioning but depends on available text coverage. Together, they provide robust recommendation across diverse scenarios.
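
One simple way to combine the three signals is a weighted average that skips whichever signal is unavailable. This is a sketch of the general idea only. The signal names and weights are assumptions, and Spotify has not published how it blends its models.

```python
# Hypothetical blend weights for the three signal types
WEIGHTS = {"collaborative": 0.5, "audio": 0.3, "text": 0.2}

def hybrid_score(signals, weights=WEIGHTS):
    """Weighted average over the signals that are available (not None)."""
    available = {name: s for name, s in signals.items() if s is not None}
    if not available:
        return 0.0
    total_weight = sum(weights[name] for name in available)
    return sum(weights[name] * s for name, s in available.items()) / total_weight

# Established track: all three signals present.
established = hybrid_score({"collaborative": 0.9, "audio": 0.8, "text": 0.4})

# Brand-new release: no listening history yet, so the collaborative
# signal is missing and the score falls back on audio and text.
new_release = hybrid_score({"collaborative": None, "audio": 0.8, "text": 0.4})

print(established, new_release)
```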

Human Guardrails

Notably, Spotify maintains human oversight despite algorithmic sophistication. Engineers implement rules preventing certain recommendation errors that algorithms might make: filtering explicit content from family accounts unless permitted, preventing children’s music from appearing in parents’ personal playlists (a problem that arises because parents play children’s music on their accounts), and flagging recommendations that seem contextually inappropriate.
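
Guardrails of this kind are typically plain rules applied after the model has ranked its candidates. The sketch below assumes a hypothetical `Track` record with `explicit` and `kids` flags. The field names and the rules themselves are illustrative, not Spotify's.

```python
from dataclasses import dataclass

@dataclass
class Track:
    title: str
    explicit: bool = False
    kids: bool = False

def apply_guardrails(candidates, allow_explicit, is_kids_profile):
    """Drop candidates that violate human-defined rules, keeping model order."""
    kept = []
    for track in candidates:
        if track.explicit and not allow_explicit:
            continue  # explicit content not permitted on this account
        if track.kids and not is_kids_profile:
            continue  # keep children's music out of an adult's personal mix
        kept.append(track)
    return kept

ranked = [Track("Lullaby", kids=True), Track("Anthem", explicit=True), Track("Ballad")]
safe = apply_guardrails(ranked, allow_explicit=False, is_kids_profile=False)
print([t.title for t in safe])
```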

This human-in-the-loop approach acknowledges that pure optimization for engagement metrics doesn’t always produce desirable outcomes. A system maximizing listening time might recommend increasingly extreme content (as YouTube’s recommendation algorithm has been reported to do), or might create echo chambers reinforcing narrow preferences rather than encouraging musical exploration. Human-defined constraints guide the system toward recommendations that serve longer-term user satisfaction rather than merely maximizing immediate engagement.

The balance between algorithmic autonomy and human oversight represents a broader design question for AI systems. Pure algorithmic control risks optimizing for metrics that don’t align with genuine user welfare. Excessive human intervention sacrifices personalization’s primary advantage—discovering patterns humans wouldn’t consciously identify. Spotify’s approach suggests a middle path: algorithmic pattern discovery constrained by human-defined guardrails encoding values the organization wants the system to respect.

Amazon: Predicting Your Next Purchase

Amazon’s recommendation infrastructure operates at extraordinary scale, analyzing billions of customer interactions to populate virtually every available space on its website and apps with personalized product suggestions. The economic logic is straightforward: customers who discover additional products they want will purchase more than customers who must search explicitly for everything they need.

The technical foundation combines collaborative filtering (customers who purchased these items also purchased those items) with content-based approaches analyzing product attributes. For physical products, content analysis might consider categories, brands, price points, customer reviews, product descriptions, and visual features extracted through computer vision. The system learns which product attributes predict purchase likelihood for different customer segments.

Amazon’s distinctive advantage stems from behavioral data richness. The platform observes not merely purchases but the entire shopping journey: search queries, product page views, time spent examining particular items, items added to cart then removed, comparison shopping patterns, and review reading behavior. This granular data provides much stronger signals about customer preferences than purchase history alone. A customer who views dozens of kitchen appliances but purchases only one still reveals considerable information about their preferences and decision criteria.
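
A common way to use such signals is to give each event type a weight and accumulate an interest score per category. The event names and weights below are invented for illustration. They are not Amazon's, and a real system would learn them from data.

```python
from collections import defaultdict

# Hypothetical weights: stronger intent signals count for more
EVENT_WEIGHTS = {
    "view": 1.0,
    "add_to_cart": 3.0,
    "remove_from_cart": -2.0,
    "purchase": 5.0,
}

def interest_by_category(events):
    """Sum weighted shopping events into a per-category interest score."""
    scores = defaultdict(float)
    for category, event in events:
        scores[category] += EVENT_WEIGHTS.get(event, 0.0)
    return dict(scores)

# A shopper who browses many kitchen appliances but buys only one
events = [("kitchen", "view")] * 12 + [
    ("kitchen", "purchase"),
    ("books", "view"),
    ("books", "add_to_cart"),
    ("books", "remove_from_cart"),
]
print(interest_by_category(events))
```

Purchase history alone would record a single kitchen item. The weighted journey shows a much stronger interest in the category.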

The recommendation engine serves multiple strategic purposes beyond immediate conversion. It exposes customers to Amazon’s catalog breadth, ensuring they associate Amazon with diverse product categories rather than only the narrow range they’ve purchased previously. It promotes higher-margin items and products where Amazon holds inventory advantages. It enables cross-category selling, introducing customers who typically purchase books to Amazon’s electronics offerings, for instance.

However, Amazon’s approach also illustrates potential tension between recommendation system goals and user experience. The algorithmic objective—maximizing purchases—doesn’t necessarily align with maximizing customer welfare. A system optimized purely for conversion might recommend impulse purchases customers later regret, or might prioritize products with higher margins over products offering better value. Amazon balances these competing objectives partially through incorporating customer satisfaction signals (returns, negative reviews) into its models, but the fundamental tension persists.

The personalization extends beyond product recommendations to search result ranking, and in principle could extend to pricing (though Amazon has publicly stated it doesn’t engage in individualized price discrimination). A customer searching “laptop” receives results ordered according to predicted purchase likelihood given their browsing and purchase history. This creates a better user experience by surfacing relevant results quickly, but also raises questions about transparency and potential manipulation.

YouTube: The Infinite Right Sidebar

YouTube’s recommendation system faces a distinct challenge: maintaining user engagement on a platform where attention constantly risks diffusing elsewhere. The infamous right sidebar, perpetually populated with suggested videos, represents YouTube’s primary mechanism for extending viewing sessions. Users rarely arrive at YouTube with specific multi-hour viewing intentions; they come for particular videos, then get drawn into extended watching through algorithmic suggestions.

The technical implementation combines collaborative filtering (users who watched this video also watched these videos) with deep neural networks analyzing video content, viewing patterns, and user context. The system considers recency (what you watched recently matters more than what you watched years ago), device type (viewing patterns differ between mobile and television), time of day, and session context (how you arrived at your current video).
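
Recency is often modeled with exponential decay, so that an old view counts for a fraction of a recent one. The sketch below assumes a 30-day half-life, an arbitrary value chosen for illustration. YouTube's actual weighting is learned by its models and is not public.

```python
from collections import defaultdict

def recency_weight(age_days, half_life_days=30.0):
    """Weight that halves every `half_life_days` (hypothetical default)."""
    return 0.5 ** (age_days / half_life_days)

def topic_affinity(history, half_life_days=30.0):
    """history: iterable of (topic, age_in_days) pairs."""
    scores = defaultdict(float)
    for topic, age_days in history:
        scores[topic] += recency_weight(age_days, half_life_days)
    return dict(scores)

# One cooking video yesterday outweighs two chess videos from years ago.
history = [("cooking", 1), ("chess", 900), ("chess", 950)]
affinity = topic_affinity(history)
print(affinity)
```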

YouTube’s algorithm has evolved repeatedly in response to criticism about promoting increasingly extreme or low-quality content. Early implementations optimized primarily for clicks and watch time, which inadvertently incentivized clickbait and sensationalism. Users clicked on provocative titles and thumbnails, generating engagement metrics the algorithm interpreted as success signals, even when users felt manipulated or dissatisfied afterward.

More recent versions incorporate viewer satisfaction surveys, diversification mechanisms (intentionally recommending content somewhat different from viewing history to prevent echo chambers), and authoritative source boosting for news and health content. These modifications acknowledge that pure engagement optimization doesn’t align with user welfare or platform sustainability. A system that maximizes immediate viewing might undermine long-term satisfaction if users feel they’ve wasted time on low-quality content.
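
Diversification is commonly implemented as a re-ranking step. The sketch below uses a greedy scheme in the style of maximal marginal relevance, which trades relevance against similarity to items already selected. It is named here as a stand-in technique, not as YouTube's method, and the video names, scores, and `trade_off` value are invented.

```python
def rerank_diverse(candidates, relevance, similarity, k, trade_off=0.7):
    """Greedily pick items that are relevant but unlike those already picked."""
    selected = []
    remaining = list(candidates)
    while remaining and len(selected) < k:
        def marginal(item):
            max_sim = max((similarity(item, s) for s in selected), default=0.0)
            return trade_off * relevance[item] - (1 - trade_off) * max_sim
        best = max(remaining, key=marginal)
        selected.append(best)
        remaining.remove(best)
    return selected

# Hypothetical candidates with model relevance scores and topics
relevance = {"cat_video_1": 0.9, "cat_video_2": 0.85, "woodworking": 0.6}
topic = {"cat_video_1": "cats", "cat_video_2": "cats", "woodworking": "crafts"}

def same_topic(a, b):
    """Crude similarity: 1.0 if two videos share a topic, else 0.0."""
    return 1.0 if topic[a] == topic[b] else 0.0

diverse = rerank_diverse(list(relevance), relevance, same_topic, k=2)
pure = rerank_diverse(list(relevance), relevance, same_topic, k=2, trade_off=1.0)
print(diverse, pure)
```

With `trade_off=1.0` the re-ranker reduces to plain relevance ordering. Lowering it lets a less relevant but different video displace a near-duplicate.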

The business model alignment matters considerably. YouTube (owned by Google) generates revenue through advertising, making watch time directly monetizable. This creates stronger incentive for engagement maximization than for platforms with subscription models like Netflix, where retention matters more than daily usage intensity. The algorithmic objectives differ accordingly, with YouTube more willing to recommend marginally relevant content if it extends sessions.

The societal implications of YouTube’s recommendation system have attracted substantial scrutiny. The algorithm’s tendency to recommend increasingly extreme content—documented across political, health, and conspiracy theory domains—suggests that engagement optimization without careful constraint produces socially harmful outcomes. Users searching for mainstream political content reportedly receive recommendations for increasingly partisan or extreme material. Health queries lead to recommendations for pseudoscientific content. These patterns emerge not from intentional bias but from engagement patterns: extreme content often provokes stronger reactions and longer viewing sessions.

YouTube’s subsequent modifications illustrate a broader principle: recommendation systems require ongoing governance addressing unintended consequences rather than one-time optimization. The system’s objectives must be continually refined as the social context evolves and as second-order effects become apparent.

Social Media: Connecting the Dots

Social platforms including Facebook, Twitter, LinkedIn, and Instagram employ recommendation engines not primarily for content but for social connections—suggesting people to follow, groups to join, or pages to like. These recommendations serve to increase platform engagement by expanding users’ network connections and content streams.

The technical approaches mirror those used for content recommendation but adapted for social graphs. The systems identify users with similar connection patterns (collaborative filtering applied to friend networks rather than content preferences) and recommend connecting to people your contacts know. They analyze profile information, interaction patterns, and content preferences to suggest accounts you might find interesting even without mutual connections.
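
The simplest graph-based suggestion, recommending people your contacts know, amounts to counting mutual connections. The social graph below is invented, and real systems combine this count with many other signals.

```python
from collections import Counter

# Hypothetical undirected friendship graph: user -> set of friends
graph = {
    "alice": {"bob", "carol"},
    "bob": {"alice", "dave", "erin"},
    "carol": {"alice", "dave"},
    "dave": {"bob", "carol"},
    "erin": {"bob"},
}

def suggest_connections(user, graph, k=3):
    """Rank non-friends by how many mutual friends they share with `user`."""
    mutual = Counter()
    for friend in graph[user]:
        for candidate in graph[friend]:
            if candidate != user and candidate not in graph[user]:
                mutual[candidate] += 1
    return mutual.most_common(k)

print(suggest_connections("alice", graph))
```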

Facebook’s “People You May Know” feature exemplifies this approach while also illustrating privacy concerns. The system reportedly uses not only mutual friends and profile information but also contact list uploads, location data, and group memberships. Users have reported receiving friend suggestions for people they know only from specific offline contexts—therapists’ other patients, for instance—suggesting the system infers relationships from subtle signals like location proximity or app usage timing.

These inferences, while technically impressive, can feel invasive. Users may not want their various social contexts connected—professional and personal identities, different friend groups, online and offline relationships. A recommendation engine that connects these domains serves platform engagement goals (more connections generate more content and more time on platform) but potentially violates users’ preferences for context separation.

Twitter’s (now X’s) recommendation of accounts to follow similarly aims to increase engagement by ensuring users have adequate content streams. The system considers accounts followed by people you follow, trending accounts in your demographic or interest categories, and accounts whose content you engage with through likes or retweets. Recent algorithmic changes have expanded recommendations to include content from accounts you don’t follow, essentially converting the platform from a curated social network into a hybrid recommendation feed.

The transition from user-controlled feeds (showing only content from explicitly followed accounts) to algorithmically curated feeds (mixing followed content with recommended content) represents a broader industry shift. Platforms argue this improves discovery and engagement. Critics contend it reduces user agency and exposes users to content they didn’t request. The debate reflects fundamental tension between platform business models—which benefit from maximum engagement—and user preferences for control over their information environment.

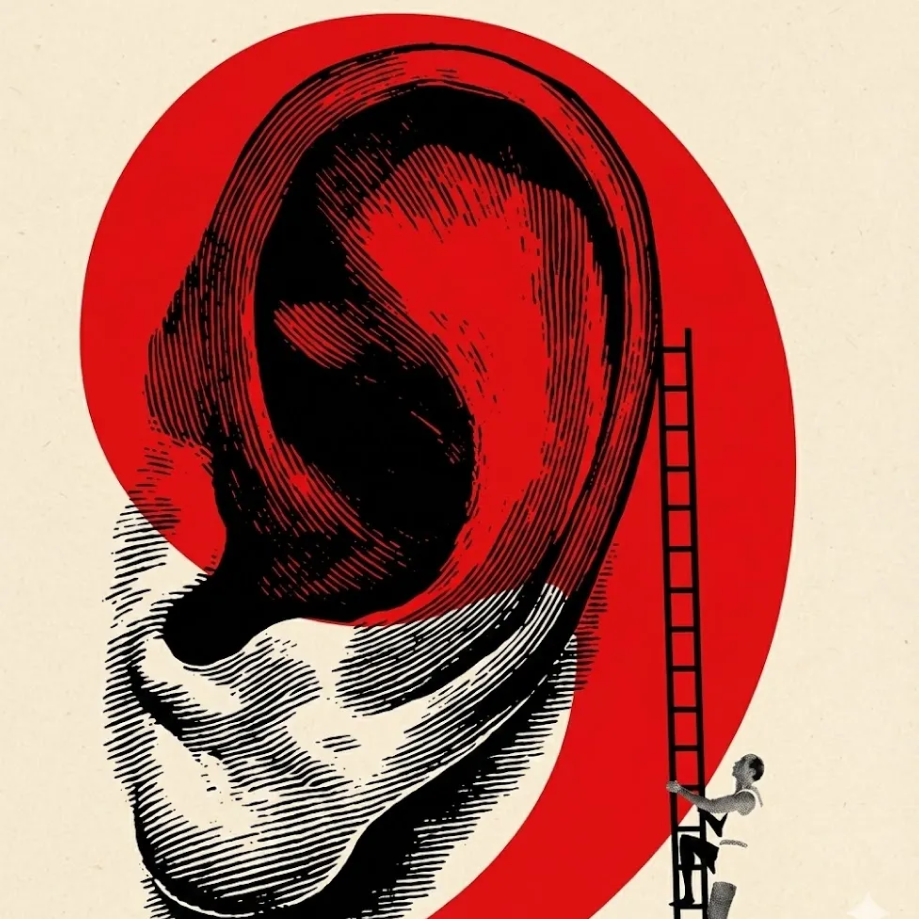

Why the Best AI Recommendations Require Great UX

Technical sophistication in recommendation algorithms does not automatically translate into positive user experience. The most accurate predictions prove worthless if they feel intrusive, inexplicable, or untrustworthy. The gap between algorithmic capability and user acceptance often determines commercial success more than algorithmic accuracy improvements beyond adequate thresholds.

The “black box” problem represents the central challenge. Deep learning systems achieve superior accuracy partially by learning abstract patterns that don’t correspond to human-comprehensible concepts. A neural network might learn that some combination of viewing patterns, demographic factors, and content attributes predicts satisfaction, but this learned representation exists only as numerical weights in a high-dimensional space. The system cannot explain in human terms why it recommended a particular item beyond superficial categorization.

This opacity creates justified skepticism. Users cannot evaluate whether recommendations reflect their actual preferences or some algorithmic artifact. When recommendations seem inexplicable or inappropriate, users cannot provide meaningful feedback beyond “I don’t like this,” offering the system minimal information for improvement. The lack of transparency prevents users from developing accurate mental models of system behavior, which would allow them to better control and benefit from personalization.

Trust in AI systems requires users to believe that a system will perform as expected, without surprising outcomes or privacy violations. This trust accumulates gradually through consistent positive experiences but collapses rapidly when systems behave inappropriately. The asymmetry favors conservative design: systems should under-promise and over-deliver rather than claim capabilities they cannot consistently achieve.

Several design principles address trust-building in recommendation systems:

Provide meaningful control. Spotify’s “private session” mode prevents listening during that session from influencing future recommendations—useful when exploring unfamiliar genres or letting children use the account. Netflix allows removing items from viewing history and rating titles to improve recommendations. These controls acknowledge that algorithms cannot perfectly infer preferences from behavior alone and that users sometimes engage with content for reasons unrelated to preference.
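
In code, controls like these can be very small. The sketch below shows a hypothetical history store with a private-session flag and a removal method. The class and attribute names are assumptions for illustration, not any service's actual API.

```python
class ListeningHistory:
    """Stores the plays that are allowed to influence recommendations."""

    def __init__(self):
        self.events = []
        self.private_session = False

    def record_play(self, track):
        """Log a play unless the user has switched on a private session."""
        if self.private_session:
            return
        self.events.append(track)

    def remove(self, track):
        """Let the user delete an item so it stops shaping suggestions."""
        self.events = [t for t in self.events if t != track]

history = ListeningHistory()
history.record_play("jazz_standard")
history.private_session = True
history.record_play("nursery_rhyme")   # ignored: private session is on
history.private_session = False
history.record_play("jazz_ballad")
print(history.events)
```

The point of the design is that exclusion happens at the data-collection step, so nothing downstream needs to know why a play is missing.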

Explain recommendations transparently. Even when full algorithmic explanation proves impossible, simple rationales improve user experience. “Because you watched X” or “Popular with viewers like you” provides minimal explanation but helps users understand the recommendation’s basis and evaluate its relevance. More sophisticated explanations might indicate confidence levels or identify which factors most influenced the recommendation.

Enable easy feedback. “Not interested” or “Don’t recommend this” buttons allow users to correct algorithmic mistakes without complex intervention. Effective systems learn from this feedback rapidly rather than requiring repeated corrections of the same error.

Default to privacy-preserving behavior. Recommendations should require opt-in rather than opt-out for sensitive categories. A health app should not begin recommending weight loss content unless users explicitly indicate interest; making such recommendations unprompted risks causing harm to users with eating disorders while solving no actual problem.

Acknowledge limitations explicitly. Systems should indicate when recommendations carry high uncertainty or when relevant data is missing. A new user should understand recommendations will improve as the system learns their preferences rather than interpreting early poor recommendations as evidence of fundamental system inadequacy.

The distinction between good and bad recommendation UX often appears in subtle details rather than major architectural differences. Two systems with identical underlying algorithms can produce dramatically different user experiences based on interface design, explanation quality, control granularity, and privacy defaults. These factors determine whether users experience personalization as helpful service or invasive surveillance.

The balance between personalization value and privacy cost varies substantially across individuals and contexts. Some users enthusiastically embrace deep personalization, willingly sharing detailed data to receive better recommendations. Others prefer minimal personalization, accepting less relevant suggestions to maintain privacy. Effective systems accommodate this variation through graduated privacy settings rather than forcing binary choices between full personalization or none.

The emerging design principle suggests that recommendation systems should make their data practices transparent and their controls accessible while defaulting to conservative behavior. Users who want aggressive personalization can opt in; users who prefer privacy can remain in default settings without constant intrusion. This approach respects user autonomy while enabling those who value personalization to access its benefits.

Conclusion: The Future of Personalization in 2025

Recommendation engines have evolved from novelty features into central components of digital platforms, fundamentally shaping how billions of people discover content, products, and connections. The technical sophistication of contemporary systems—integrating neural networks, natural language processing, computer vision, and massive-scale data processing—enables personalization accuracy that would have seemed impossible a decade earlier.

Yet accuracy alone explains neither success nor failure. The most sophisticated algorithm proves commercially worthless if users distrust it, find it invasive, or cannot understand its behavior. Conversely, relatively simple recommendation approaches can succeed when embedded in thoughtful user experiences that build trust, provide control, and respect privacy boundaries.

The pattern observed across Netflix, Spotify, Amazon, YouTube, and social platforms suggests several consistent principles. Successful systems combine multiple algorithmic approaches rather than relying on any single technique. They incorporate human oversight preventing obviously inappropriate recommendations that pure engagement optimization might produce. They provide user controls allowing correction of algorithmic errors and accommodation of privacy preferences. They explain recommendations sufficiently that users can evaluate relevance without requiring full algorithmic transparency.

The principle running through this analysis, “if AI doesn’t work for people, it doesn’t work,” carries particular weight for recommendation systems precisely because their value proposition depends entirely on user acceptance. A recommendation engine optimized purely for technical accuracy metrics while ignoring user experience will likely underperform a less sophisticated system designed with human factors central from the outset.

The trajectory toward 2025 and beyond suggests continued technical advancement in recommendation algorithms as machine learning methods improve and as platforms accumulate richer data about user preferences and context. Deep learning architectures will likely become more sophisticated, better at integrating diverse data types and at learning nuanced preference patterns. Yet this technical progress will matter commercially only insofar as it translates into user experiences that feel helpful rather than manipulative, empowering rather than constraining.

The competitive advantage increasingly lies not in algorithmic sophistication—which tends toward commodification as methods diffuse across the industry—but in design decisions about how algorithms interact with users. The platforms that succeed will be those that recognize recommendation systems as mediators of human experience rather than merely as optimization problems, and that design accordingly with human psychology and preferences central rather than peripheral.

For those building personalized systems, the practical implication is clear: invest as heavily in user experience research and design as in algorithmic development. Understand how users perceive and respond to recommendations. Provide meaningful control over personalization intensity. Default to privacy-respecting behavior. Explain system decisions at least minimally. Acknowledge limitations explicitly. These design choices determine whether sophisticated algorithms become valuable services or ignored features.

The invitation extends to users as well: explore the data settings and recommendation controls in the platforms you use. Most services provide more control over personalization than users realize, though accessing these controls often requires navigating preference menus that platforms don’t prominently advertise. Understanding what data feeds recommendations and exercising available controls can substantially improve the relevance of suggestions while maintaining privacy boundaries you find comfortable.

The future of personalization depends less on continued algorithmic advancement—though that will certainly occur—than on developing social and design conventions for how personalized systems should behave. What degree of inference is helpful versus creepy? How should systems acknowledge uncertainty? What controls should users have? These questions have no purely technical answers. They require ongoing negotiation between system capabilities and human preferences, between commercial objectives and user welfare, between personalization’s benefits and its costs. The platforms that navigate these tensions thoughtfully will shape not merely their own success but the broader trajectory of how humans experience increasingly intelligent systems.
